Measuring Time-to-Trust

When customers stop checking your work is when you win

A few weeks ago I wrote about how, in today’s AI era, customers aren’t just looking for the product you build. They’re looking for the judgment you bring. Whether you’re an FDX embedded with a customer, an engineer on-site, or a founder doing the first sales yourself, the goal is the same: build enough trust fast enough that the customer is willing to bet their operation on you.

The real sales metric might be time-to-trust: the moment a customer stops checking your work.

Because every company can point to a failed AI pilot or a tool that promised the world and never integrated. And if there is even a chance a buyer thinks they can vibecode their way to a solution over a weekend, you’re done. Proving ROI and short time-to-value are necessary but not sufficient.

The question now isn’t “Does your product work?” It’s “Do I trust you enough to bet my operation on this?”

How fast you can get there determines whether you close or die in pilot purgatory.

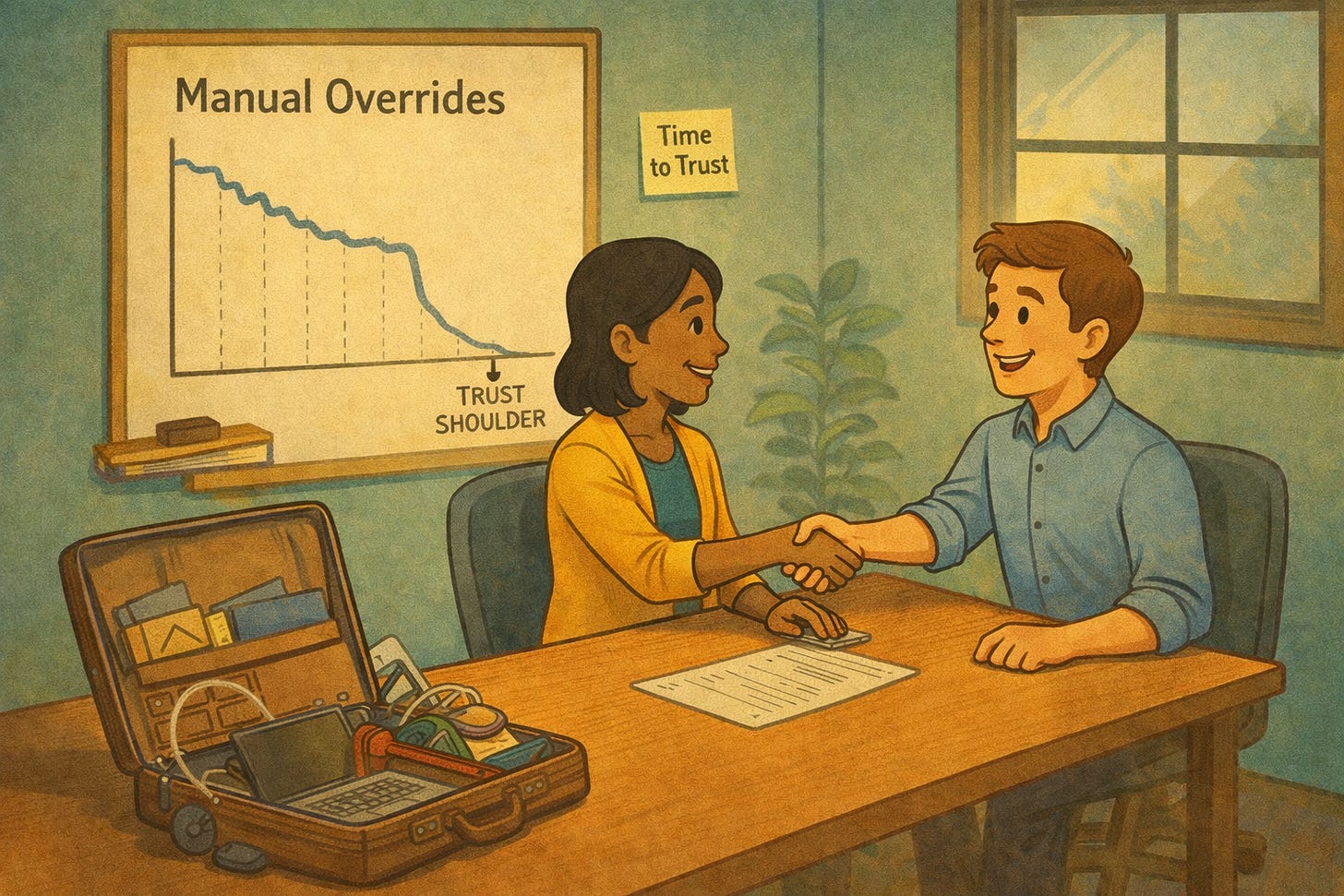

Find the Shoulder

So how do you know when a customer trusts the product?

Prior to going full time with Garuda, I was an early employee at Legion, where we built an AI-powered workforce scheduling platform for hourly workers (mind you, this was in 2019, before today’s AI wave). When we launched with a new customer, we could watch the same pattern play out every time.

Store managers would receive the AI-generated schedule and tweak it. Swap a shift here, adjust a pairing there, override a timing they didn’t love. The model would quietly learn from every edit and incorporate their preferences into the following week’s schedule. The next week would come around, more edits, and the cycle continued.

And then at some point the edits fell off a cliff. Every time.

This is what I called the trust shoulder. The point where the manager stopped overriding the AI and started relying on it.

Or, more broadly, the moment where the behavior shifts from checking your work to trusting it. And then spending less time in the product.
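If you want to look for the shoulder in your own usage data, here is a minimal Python sketch of one way to do it. It is not how Legion measured this; the function name `find_trust_shoulder`, the `drop_ratio` and `hold_weeks` thresholds, and the sample edit counts are all hypothetical and chosen only for illustration. The idea is to flag the first week where edits fall well below the running average of earlier weeks and stay there.

```python
from typing import Optional, Sequence


def find_trust_shoulder(
    weekly_edits: Sequence[int],
    drop_ratio: float = 0.5,   # assumed threshold: edits must fall to half the baseline
    hold_weeks: int = 2,       # assumed: the drop must persist this many weeks
) -> Optional[int]:
    """Return the 0-based index of the first week where edits drop to at most
    drop_ratio times the average of all earlier weeks and stay that low for
    hold_weeks consecutive weeks. Return None if no such week exists."""
    for week in range(1, len(weekly_edits) - hold_weeks + 1):
        baseline = sum(weekly_edits[:week]) / week
        if baseline == 0:
            continue  # no earlier edits to compare against
        window = weekly_edits[week:week + hold_weeks]
        if all(count <= drop_ratio * baseline for count in window):
            return week
    return None


# Hypothetical edit counts per week for one store manager.
edits = [42, 38, 35, 31, 6, 4, 3]
shoulder = find_trust_shoulder(edits)
print(shoulder)  # 0-based week index, or None if edits never fall off
```

Weeks from launch to that index is one rough way to put a number on time-to-trust for a single customer. Requiring the drop to hold for a couple of weeks is a deliberate choice here, so a holiday week with fewer edits doesn't get mistaken for trust.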

In the agentic era, trust can actually mean customers using your tool less, not more.

If your AI is handling workflows autonomously, the signal isn’t DAUs or session length. The signal is they stopped logging in to supervise. The old SaaS playbook optimized for usage and engagement. The new one needs to optimize for confident delegation.

Pack Your Suitcase

Many companies solve this by showing up.

One of our Garuda portfolio companies saw a 4-5x increase in ACV once they started placing engineers on-site with customers, compared to quarters where they relied on remote sales and CS-assisted demos (in one case, ACV went from $300K to $1M!).

Another built their version of the Iron Man suitcase: travel on-site, spend a week in the prospect’s building, show the product working on their data, and at the end of the week there’s either ROI or there isn’t. No six-month pilot.

One week, go/no-go. Because it is harder to brush someone off when they’re sitting with you in the company cafeteria. And in a post-COVID world where founders got comfortable selling over Zoom, the willingness to show up sets you apart more than most people realize.

People Buy from People

One CIO told me recently that he now requires an on-site visit before signing any AI vendor. Not to evaluate the product. To make sure he’s buying from a real person and the face he’s seen on Zoom isn’t a bot! I expect this to be a common ask going forward.

Because until AI is buying from AI, people still want to buy from people. And the faster they stop checking your work, the faster you’ll earn their business. Whatever your product does, there’s a version of this trust signal in your usage data. Find the behavior that shows a customer has stopped second-guessing you, and track it like you track revenue. Then shorten the time to trust as much as possible.